I'm curious if you have any thoughts on the effect regulations will have on AI timelines. To have a transformative effect, AI would likely need to automate many forms of management, which involves making a large variety of decisions without the approval of other humans. The obvious effect of deploying these technologies will therefore be to radically upend our society and way of life, taking control away from humans and putting it in the hands of almost alien decision-makers. Will bureaucrats, politicians, voters, and ethics committees simply stand idly by while the tech industry takes over our civilization like this?

On the one hand, it is true that cars, airplanes, electricity, and computers were all introduced with relatively few regulations. These technologies went on to change our lives greatly in the last century and a half. On the other hand, nuclear power, human cloning, genetic engineering of humans, and military weapons each have a comparable potential to change our lives, and yet are subject to tight regulations, both formally, as the result of government-enforced laws, and informally, as engineers regularly refuse to work on these technologies indiscriminately, fearing backlash from the public.

One objection is that it is too difficult to slow down AI progress. I don't buy this argument.

A central assumption of the Bio Anchors model, and all hardware-based models of AI progress more generally, is that getting access to large amounts of computation is a key constraint to AI development. Semiconductor fabrication plants are easily controllable by national governments and require multi-billion dollar upfront investments, which can hardly evade the oversight of a dedicated international task force.

We saw in 2020 that, if threats are big enough, governments have no problem taking unprecedented action, quickly enacting sweeping regulations of our social and business life. If anything, a global limit on manufacturing a particular technology enjoys even more precedent than, for example, locking down over half of the world's population under some sort of stay-at-home order.

Another argument states that the incentives to make fast AI progress are simply too strong: first mover advantages dictate that anyone who creates AGI will take over the world. Therefore, we should expect investments to accelerate dramatically, not slow down, as we approach AGI. This argument has some merit, and I find it relatively plausible. At the same time, it relies on a very pessimistic view of international coordination that I find questionable. A similar first-mover advantage was also observed for nuclear weapons, prompting Bertrand Russell to go as far as saying that only a world government could possibly deter nations from developing and using nuclear weapons. Yet, I do not think this prediction was borne out.

Finally, it is possible that the timeline you state here is conditioned on no coordinated slowdowns. I sometimes see people making this assumption explicit, and in your report you state that you did not attempt to model "the possibility of exogenous events halting the normal progress of AI research". At the same time, if regulation ends up mattering a lot -- say, it delays progress by 20 years -- then all the conditional timelines will look pretty bad in hindsight, as they will have ended up omitting one of the biggest, most determinative factors of all. (Of course, it's not misleading if you just state upfront that it's a conditional prediction.)

Thanks so much for this update! Some quick questions:

- Are you still estimating that the transformative model uses probably about 1e16 parameters & 1e16 flops? IMO something more like 1e13 is more reasonable.

- Are you still estimating that algorithmic efficiency doubles every 2.5 years (for now at least, until R&D acceleration kicks in)? I've heard from others (e.g. Jaime Sevilla) that more recent data suggests it's doubling every 1 year currently.

- Do you still update against the lower end of training FLOP requirements, on the grounds that if we were 1-4 OOMs away right now the world would look very different?

- Is there an updated spreadsheet we can play around with?

Great post!

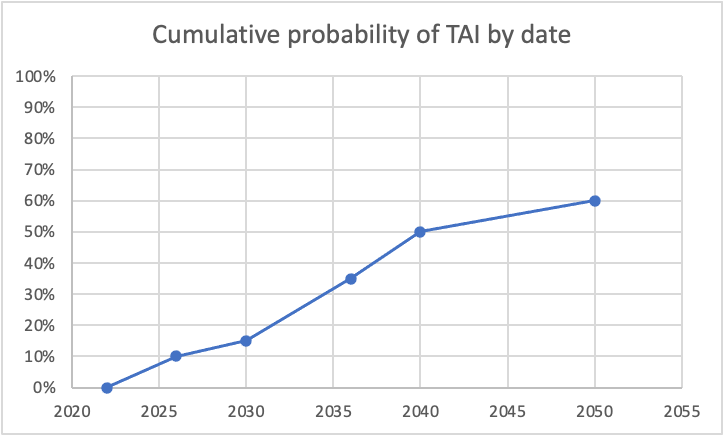

I was curious what some of this looked like, so I graphed it, using the dates you specifically called out probabilities. For simplicity, I assumed constant probability within each range (though I know you said this doesn't correspond to your actual views). Here's what I got for cumulative probability:

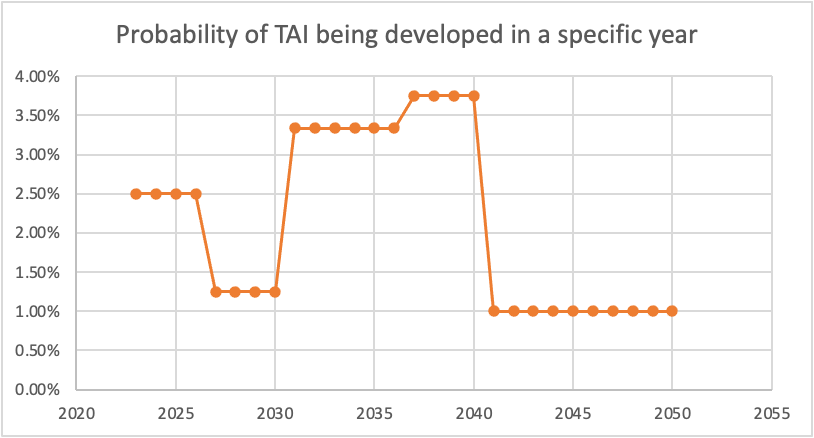

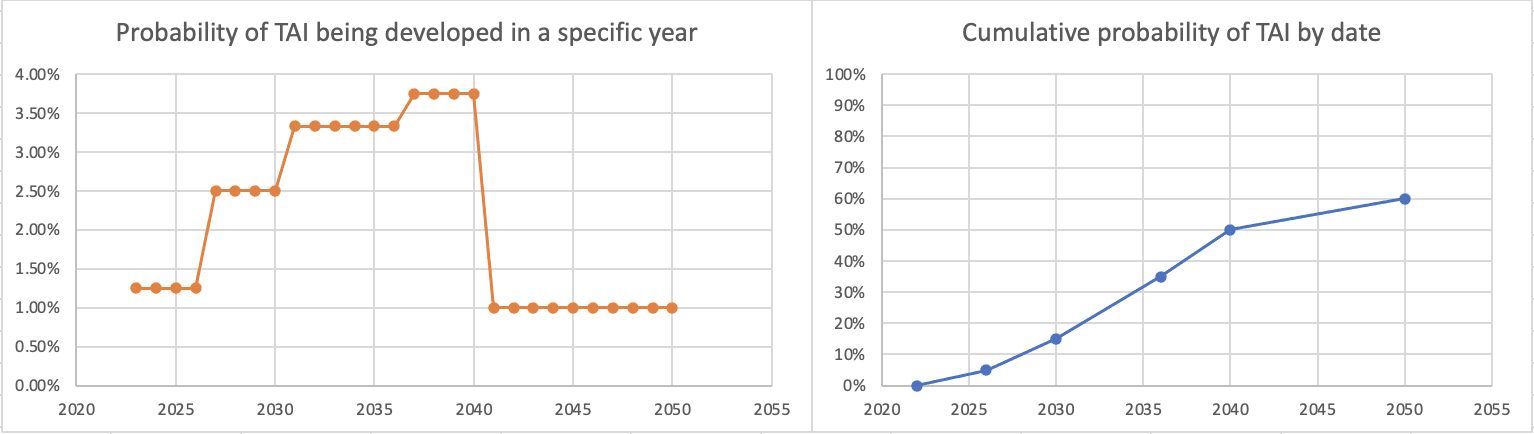

And here's the corresponding probabilities of TAI being developed per specific year:

The dip between 2026 and 2030 seems unjustified to me. (I also think the huge drop from 2040-2050 is too aggressive, as even if we expect a plateauing of compute/another AI winter/etc, I don't think we can be super confident exactly when that would happen, but this drop seems more defensible to me than the one in the late 2020s.)

If we instead put 5% for 2026, here's what we get:

which seems more intuitively defensible to me. I think this difference may be important, as even shift of small numbers of years like this could be action-relevant when we're talking about very short timelines (of course, you could also get something reasonable-seeming by shifting up the probabilities of TAI in the 2026-2030 range).

I'd also like to point out that your probabilities would imply that if TAI is not developed by 2036, there would be an implied 23% conditional chance of it then being developed in the subsequent 4 years ((50%-35%)/(100%-35%)), which also strikes me as quite high from where we're now standing.

Now I’m inclined to think that just automating most of the tasks in ML research and engineering -- enough to accelerate the pace of AI progress manyfold -- is sufficient.

This seems to assume that human labor is currently the limiting bottleneck in AI research, and by a large multiplicative factor.

That doesn't seem likely to me. Compute is a nontrivial bottleneck even in many small-scale experiments, and in particular is a major bottleneck for research that pushes the envelope of scale, which is generally how new SOTA results and such get made these days.

To be concrete, consider this discussion of "the pace of AI progress" elsewhere in the post:

But progress on some not-cherry-picked benchmarks was notably faster than what forecasters predicted, so that should be some update toward shorter timelines for me.

That post is about four benchmarks. Of the four, it's mostly MATH and MMLU that are driving the sense of "notably faster progress" here. The SOTAs for these were established by

- MATH: Minerva, which used a finetuned PaLM-540B model together with already existing (if, in some cases, relatively recently introduced) techniques like chain-of-thought

- MMLU: Chinchilla, a model with the same design and (large) training compute cost as the earlier Gopher, but with different hyperparameters chosen through a conventional (if unusually careful) scaling law analysis

In both cases, relatively simple and mostly non-original techniques were combined with massive compute. Even if you remove the humans entirely, the computers still only go as far as they go.

(Human labor is definitely a bottleneck in making the computers go faster -- like hardware development, but also specialized algorithms for large-scale training. But this is a much more specialized area than "AI research" generally, so there's less available pretraining data on it -- especially since a large[r] fraction of this kind of work is likely to be private IP.)

Good post!

I understand that the specific numbers in this post are "rough" and "volatile," but I want to note that 35% by 2036, 50% by 2040, and 60% by 2050 means 3.75% per year 2036–2040 and 1% per year 2040–2050, which is a surprisingly steep drop-off. Or as an alternative framing, conditional on TAI not having appeared by 2040, my expected credence in 2040 that TAI appears in the next 10 years is much greater than 20% (where 20% is your implied probability of TAI between 2040 and 2050, conditional on no TAI in 2040). My median timeline is somewhat shorter than yours, but my credence in TAI by 2050 is substantially higher.
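To make the arithmetic above easy to check, here is a minimal sketch that turns the quoted cumulative probabilities into an average per-year probability and a conditional probability for each interval. The function name `interval_stats` and the assumption that probability is spread evenly within each interval are illustrative choices, not part of the original post.

```python
# Cumulative probabilities quoted in the comment above: year -> P(TAI by that year).
cumulative = {2036: 0.35, 2040: 0.50, 2050: 0.60}


def interval_stats(cumulative):
    """For each consecutive pair of milestone years, return a tuple of
    (start, end, average per-year probability mass, probability of TAI in
    the interval conditional on no TAI by the start year)."""
    years = sorted(cumulative)
    rows = []
    for start, end in zip(years, years[1:]):
        mass = cumulative[end] - cumulative[start]
        # Assumption: probability mass is spread evenly over the interval.
        per_year = mass / (end - start)
        conditional = mass / (1.0 - cumulative[start])
        rows.append((start, end, per_year, conditional))
    return rows


for start, end, per_year, conditional in interval_stats(cumulative):
    print(f"{start}-{end}: {per_year:.2%} per year, "
          f"{conditional:.1%} conditional on no TAI by {start}")
```

Under those assumptions this gives the 3.75% and 1% per-year figures and the 20% conditional figure mentioned here, and roughly 23% for 2036 to 2040 conditional on no TAI by 2036.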

(That said, I lack something like the knowledge, courage, or epistemic virtue to be more explicit about my timelines, because it's hard; strong-upvote for this useful and virtuous post, and thanks for using specific numbers so much.)

Hm, yeah, I bet if I reflected more things would shift around, but I'm not sure the fact that there's a shortish period where the per-year probability is very elevated followed by a longer period with lower per-year probability is actually a bad sign.

Roughly speaking, right now we're in an AI boom where spending on compute for training big models is going up rapidly, and it's fairly easy to actually increase spending quickly because the current levels are low. There's some chance of transformative AI in the middle of this spending boom -- and because resource inputs are going up a ton each year, the probability of TAI by date X would also be increasing pretty rapidly.

But the current spending boom is pretty unsustainable if it doesn't lead to TAI. At some point in the 2040s or 50s, if we haven't gotten transformative AI by then, we'll have been spending 10s of billions training models, and it won't be that easy to keep ramping up quickly from there. And then because the input growth will have slowed, the increase in probability from one year to the next will also slow. (That said, not sure how this works out exactly.)

(+1. I totally agree that input growth will slow sometime if we don't get TAI soon. I just think you have to be pretty sure that it slows right around 2040 to have the specific numbers you mention, and smoothing out when it will slow down due to that uncertainty gives a smoother probability distribution for TAI.)

Thanks for the update, Ajeya! I found the details here super interesting.

I already thought that timelines disagreements within EA weren't very cruxy, and this is another small update in that direction: I see you and various MIRI people and Metaculans give very different arguments about how to think about timelines, and then the actual median year I tend to hear is quite similar.

(And also, all of the stated arguments on all sides continue to seem weak/inconclusive to me! So IMO there's not much disagreement, and it would be very easy for all of us to be wrong simultaneously. My intuition is that it would be genuinely weird if AGI is much more than 70 years away, but not particularly weird if it's 1 year away, 10 years away, 60 years away, etc.)

I think the main value of in-depth timelines research and debate has been that it reveals disagreements about other topics (background views about ML, forecasting methodology, etc.).

Yeah I agree more of the value of this kind of exercise (at least within the community) is in revealing more granular disagreements about various things. But I do think there's value in establishing to more external people something high level like "It really could be soon and it's not crazy or sci fi to think so."

I don’t expect a discontinuous jump in AI systems’ generality or depth of thought from stumbling upon a deep core of intelligence

I felt surprised reading this, since "ability to automate AI development" feels to me like a central example of a "deep core of intelligence"—i.e., of a cognitive ability which makes attaining many other cognitive abilities far easier. Does it not feel like a central example to you?

I don't see it that way, no. Today's coding models can help automate some parts of the ML researcher workflow a little bit, and I think tomorrow's coding models will automate more and more complex parts, and so on. I think this expansion could be pretty rapid, but I don't think it'll look like "not much going on until something snaps into place."

As a result, my timelines have also concentrated more around a somewhat narrower band of years. Previously, my probability increased from 10% to 60% over the course of the ~32 years between ~2032 and ~2064; now this happens over the ~24 years between ~2026 and ~2050.

10% probability by 2026 (!!)

Huh, I claim Ajeya's timelines are much more coherent if we replace 2026 with 2027.5 or 2028.* 10% between now and 2026, then 5% between 2026 and 2030, then 20% between 2030 and 2036 is really weird.

*Changing 2026 (rather than 2030) just because Ajeya's 2026 cumulative probability seems less considered than her 2030 and 2036 cumulative probabilities.

(Coherence aside, when I now look at that number it does seem a bit too high, and I feel tempted to move it to 2027-2028, but I dunno, that kind of intuition is likely to change quickly from day to day.)

But in my report I arrive at a forecast by fixing a model size based on estimates of brain computation, and then using scaling laws to estimate how much data is required to train a model of that size. The update from Chinchilla is then that we need more data than I might have thought.

I'm confused by this argument. The old GPT-3 scaling law is still correct, just not compute-optimal. If someone wanted to, they could still go on using the old scaling law. So discovering better scaling can only lead to an update towards shorter timelines, right?

(Except if you had expected even better scaling laws by now, but it didn't sound like that was your argument?)

If you assume the human brain was trained roughly optimally, then requiring more data, at a given parameter number, to be optimal pushes timelines out. If instead you had a specific loss number in mind, then a more efficient scaling law would pull timelines in.
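A rough sketch of the first case (parameter count held fixed) may help. It assumes the common rule of thumb that training compute is about 6 × parameters × tokens, and it treats tokens-per-parameter as a constant taken from the published GPT-3 run (175B parameters, about 300B tokens) and the Chinchilla run (70B parameters, about 1.4T tokens). Holding that ratio constant across scales is a simplification, and the 1e13 parameter count is a hypothetical value chosen only for illustration.

```python
def training_flop(params, tokens_per_param):
    """Approximate training compute with the C ~ 6 * N * D rule of thumb."""
    tokens = params * tokens_per_param
    return 6.0 * params * tokens


# Tokens-per-parameter ratios implied by two published training runs.
GPT3_RATIO = 300e9 / 175e9        # about 1.7 tokens per parameter
CHINCHILLA_RATIO = 1.4e12 / 70e9  # about 20 tokens per parameter

params = 1e13  # hypothetical fixed model size, for illustration only

old = training_flop(params, GPT3_RATIO)
new = training_flop(params, CHINCHILLA_RATIO)

print(f"GPT-3-style data ratio:      {old:.2e} FLOP")
print(f"Chinchilla-style data ratio: {new:.2e} FLOP")
print(f"Increase at fixed parameter count: {new / old:.1f}x")
```

With the model size pinned, the higher data ratio can only raise the compute estimate, which is why it pushes timelines out. If a target loss were pinned instead, a more efficient scaling law would lower the compute needed.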

Gotcha, this makes sense to me now, given the assumption that to get AGI we need to train a P-parameter model on the optimal scaling, where P is fixed. Thanks!

...though now I'm confused about why we would assume that. Surely that assumption is wrong?

- Humans are very constrained in terms of brain size and data, so we shouldn't assume that these quantities are scaled optimally in some sense that generalizes to deep learning models.

- Anyhow we don't need to guess the amount of data the human brain needs: we can just estimate it directly, just like we estimate brain-parameter count.

To move to a more general complaint about the bio anchors paradigm: it never made much sense to assume that current scaling laws would hold; clearly scaling will change once we train on new data modalities; we know that human brains have totally different scaling laws than DL models; and an AGI architecture will again have different scaling laws. Going with the GPT-3 scaling law is a very shaky best guess.

So it seems weird to me to put so much weight on this particular estimate, such that someone figuring out how to scale models much more cheaply would update one in the direction of longer timelines! Surely the bio anchor assumptions cannot possibly be strong enough to outweigh the commonsense update of 'whoa, we can scale much more quickly now'?

The only way that update makes sense is if you actually rely mostly on bio anchors to estimate timelines (rather than taking bio anchors to be a loose prior, and update off the current state and rate of progress in ML), which seems very wrong to me.

You mention that you're surprised to have not seen "more vigorous commercialization of language models" recently beyond mere "novelty". Can you say more about what particular applications you had in mind? Also, do you consider AI companionship as useful or merely novel?

I expect the first killer app that goes mainstream will mark the PONR, i.e. the final test of whether the market prefers capabilities or safety.

Can you say more about what particular applications you had in mind?

Stuff like personal assistants who write emails / do simple shopping, coding assistants that people are more excited about than they seem to be about Codex, etc.

(Like I said in the main post, I'm not totally sure what PONR refers to, but don't think I agree that the first lucrative application marks a PONR -- seems like there are a bunch of things you can do after that point, including but not limited to alignment research.)

I think your timeline is on point regarding capabilities. However, I do not entirely follow the jump from expert-level programming and brute-force search to an "explosive feedback loop of AI progress". You point out that there is a "clear-cut search space" in machine learning, which is true, and I agree that brute-force search could be expected to yield some progress, likely substantial progress, whereas in other scientific disciplines similar progress would be unlikely. I will even concede that explosive progress is possible, but I fail to grasp why it is likely.

I think that the "clear-cut search space" is limited to low-hanging fruit, such as "different small tweaks to architectures, loss functions, optimization algorithms", and I expect that to get from automated AI progress to automated scientific discovery something more is needed. If you're suggesting that efficiency improvements from "different small tweaks to architectures, loss functions, optimization algorithms" would be of an order of magnitude or greater -- enough to move progressively on to medium and longer horizon models -- is there evidence in this post or the original report to support this that I am missing? This could plausibly lead to an "explosive feedback loop of AI progress", but I would not assume that it will.

Alternatively, it seems plausible that "directly writing learning algorithms much more sample-efficient than SGD" would be sufficient to get to automated scientific discovery -- are you suggesting that "different small tweaks to architectures, loss functions, optimization algorithms" is going to be enough to generate a novel learning algorithm? The search space for that seems "less clear-cut" and much more like what would be required to automate progress in other scientific disciplines.

I worked on my draft report on biological anchors for forecasting AI timelines mainly between ~May 2019 (three months after the release of GPT-2) and ~Jul 2020 (a month after the release of GPT-3), and posted it on LessWrong in Sep 2020 after an internal review process. At the time, my bottom line estimates from the bio anchors modeling exercise were:[1]

These were roughly close to my all-things-considered probabilities at the time, as other salient analytical frames on timelines didn’t do much to push back on this view. (Though my subjective probabilities bounced around quite a lot around these values and if you’d asked me on different days and with different framings I’d have given meaningfully different numbers.)

It’s been about two years since the bulk of the work on that report was completed, during which I’ve mainly been thinking about AI. In that time it feels like very short timelines have become a lot more common and salient on LessWrong and in at least some parts of the ML community.

My personal timelines have also gotten considerably shorter over this period. I now expect something roughly like this:

As a result, my timelines have also concentrated more around a somewhat narrower band of years. Previously, my probability increased from 10% to 60%[4] over the course of the ~32 years between ~2032 and ~2064; now this happens over the ~24 years between ~2026 and ~2050.

I expect these numbers to be pretty volatile too, and (as I did when writing bio anchors) I find it pretty fraught and stressful to decide on how to weigh various perspectives and considerations. I wouldn’t be surprised by significant movements.

In this post, I’ll discuss:

This post is a catalog of fairly gradual changes to my thinking over the last two years; I'm not writing this post in response to an especially sharp change in my view -- I just thought it was a good time to take stock, particularly since a couple of people have asked me about my views recently.

Updates that push toward shorter timelines

I list the main updates toward shorter timelines below roughly in order of importance; there are some updates toward longer timelines as well (discussed in the next section) which claw back some of the impact of these points.

Picturing a more specific and somewhat lower bar for TAI

Thanks to Carl Shulman, Paul Christiano, and others for discussion around this point.

When writing my report, I was imagining that a transformative model would likely need to be able to do almost all the tasks that remote human workers can do (especially the scientific research-related tasks). Now I’m inclined to think that just automating most of the tasks in ML research and engineering -- enough to accelerate the pace of AI progress manyfold -- is sufficient.

Roughly, my previous picture (similar to what Holden describes here) was:

Automate science → Way more scientists → Explosive feedback loop of technological progress

But if it’s possible to automate science with AI, then automating AI development itself seems like it would make the world crazy almost as quickly:

Automate AI R&D → Explosive feedback loop of AI progress specifically → Much better AIs that can now automate science (and more) → Explosive feedback loop of technological progress

To oversimplify, suppose I previously thought it would take the human AI development field ~10 years of work from some point T to figure out how to train a scientist-AI. If I learn they magically got access to AI-developer-AIs that accelerate progress in the field by 10x, I should now think that it will take the field ~1 year from point T to get to a scientist-AI.

This feels like a lower bar for TAI than what I was previously picturing -- the most obvious reason is because automating one field should be easier than automating all fields, meaning that the model size required should be appreciably smaller. But additionally, I think AI development in particular seems like it has properties that make it easier to automate with only short horizon training (see below for some discussion). So this update reduces my estimate of both model size and effective horizon length.

Feeling like meta-learning may be unnecessary, making short horizon training seem more plausible

Thanks to Carl Shulman, Dan Kokotajlo, and others for discussion of what short horizon training could look like.

In my report, I acknowledged that models trained with short effective horizon lengths could probably do a lot of economically useful work, and that breaking long tasks down into smaller pieces could help a lot. The main candidate in my mind for a task that might require long training horizons to learn was (and still is) “efficient learning” itself.

That is, I thought that a meta-learning project attempting to train a model on many instances of the task “master some complex new skill (that would take a human a long time to learn, e.g. a hard video game or a new type of math) from scratch within the current episode and then apply it” would have a long effective horizon length, since each individual learning task (each “data point”) would take the model some time to complete. Absent clever tricks, my best guess was that this kind of meta-learning run would have an effective horizon length roughly similar to the length of time it would take for a human to learn the average skill in the distribution.

I felt like having the ability to learn novel skills in a sample-efficient way would be important for a model to have a transformative impact, and was unsure about the extent to which clever tricks could make things cheaper than the naive view of “train the model on a large number of examples of trying to learn a complex task over many timesteps.” This pulled my estimate for effective horizon length upwards (to a median of multiple subjective hours).

By and large, I haven’t really seen much evidence in the last two years that this kind of meta-learning -- where each object-level task being learned would take a human a long time to learn -- can be trained much more cheaply than I thought, or much evidence that ML can directly achieve human-like sample efficiencies without the need for expensive meta-learning (footnote attempts to briefly address some possible objections here).[5]

Instead, as I’ve thought harder about the bar for “transformative,” I’ve come to think that it’s likely not necessary for the first transformative models to learn new things super efficiently. Specifically, if the main thing needed to have a transformative impact is to accelerate AI development itself:

I do still think that eventually AI systems will learn totally new skills much more efficiently than existing ML systems do, whether that happens through meta-learning or through directly writing learning algorithms much more sample-efficient than SGD. But now this seems likely to come after short-horizon, inefficiently-trained coding models operating pretty close to their training distributions have massively accelerated AI research.

Explicitly breaking out “GPT-N” as an anchor

This change is mainly a matter of explicitly modeling something I’d thought of but found less salient at the time; it felt more important to me to factor it in after the lower bar for TAI made me consider short horizons in general more likely.

My original Short Horizon neural network anchor assumed that effective horizon length would be log-uniformly distributed between ~1 subjective second (which is about GPT-3 level) and ~1000 subjective seconds; this meant that the Short Horizon anchor was assuming an effective horizon length substantially longer than a pure language model (the median, i.e. the mean in log space, was ~32 subjective seconds, vs ~1 subjective second for a language model).
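As a quick check on where the ~32 second figure comes from, the sketch below computes summary statistics for a log-uniform distribution on [1, 1000] subjective seconds. The function name is an illustrative assumption. The formulas are the standard ones for a log-uniform distribution: the median equals the geometric mean of the endpoints, and the arithmetic mean is (high − low) / ln(high / low).

```python
import math


def log_uniform_summary(low, high):
    """Return (median, arithmetic mean) of a log-uniform distribution on [low, high].

    The median coincides with the geometric mean of the two endpoints.
    """
    median = math.sqrt(low * high)
    mean = (high - low) / math.log(high / low)
    return median, mean


median, mean = log_uniform_summary(1.0, 1000.0)
print(f"median / geometric mean: {median:.1f} subjective seconds")
print(f"arithmetic mean:         {mean:.1f} subjective seconds")
```

The ~32 second figure is the midpoint in log space. The arithmetic mean is considerably larger because the long upper end of the range dominates it.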

I’m now explicitly putting significant weight on an amount of compute that’s more like “just scaling up language models to brain-ish sizes.” (Note that this hypothesis/anchor is just saying that the training computation is very similar to the amount of computation needed to train GPT-N, not that we’d literally do nothing else besides train a predictive language model. For example, it’s consistent with doing RL fine-tuning but just needing many OOMs less data for that than for the original training run -- and I think that’s the most likely way it would manifest.)

Considering endogeneities in spending and research progress

Thanks to Carl Shulman for raising this point, and to Tom Davidson for research fleshing it out.

My report forecasted algorithmic progress (the FLOP required to train a transformative model in year Y), hardware progress (FLOP / $ in year Y), and willingness to spend ($ that the largest training run could spend on FLOP in year Y) as simple trendline forecasts, which I didn’t put very much thought into.

In the open questions section, I gestured at various ways these forecasts could be improved. One salient improvement (mentioned but not highlighted very much) would be to switch from a black box trend extrapolation to a model that takes into account how progress in R&D relates to R&D investment.

That is, rather than saying “Progress in [hardware/software] has been [X doublings per year] recently, so let’s assume it continues that way,” we could say:

This would then allow us to express beliefs about how investment in R&D will change, which can then translate into beliefs about how fast research will progress. And if ML systems have lucrative near-term applications, then it seems likely there will be demand for increasing investment into hardware and software R&D beyond the historical trend, suggesting that this progress should happen faster than I model.

Furthermore, it seems possible that pre-transformative systems would substantially automate some parts of AI research itself, potentially further increasing the effective “total R&D efforts gone into AI research” beyond what might be realistic from increasing the human labor force alone.

Seeing continued progress and no major counterexamples to DL scaling well

My timelines model assumed that there was a large (80%) chance that scaling up 2020 ML techniques to use some large-but-not-astronomically-large amount of computation (and commensurate amount of data) would work for producing a transformative model.[7]

Over the last two years, I’d say deep learning has broadly continued to scale up well. Since that was the default assumption of my model, there isn’t a big update toward shorter timelines here -- but there was some opportunity for deep learning to “hit a wall” over the last two years, and that didn’t really happen, modestly increasing my confidence in the premise.

Seeing some cases of surprisingly fast progress

My forecasting method was pretty anchored to estimates of brain computation, rather than observations of the impressiveness of models that existed at the time, so I was pretty unsure what the framework would imply for very-near-term progress[8] (“How good at coding would a mouse be, if it’d been bred over millennia to write code instead of be a mouse?”).

As a result, I didn’t closely track specific capabilities advances over the last two years; I’d have probably deferred to superforecasters and the like about the timescales for particular near-term achievements. But progress on some not-cherry-picked benchmarks was notably faster than what forecasters predicted, so that should be some update toward shorter timelines for me. I’m pretty unsure how much, and it’s possible this should be larger than I think now.

Making a one-time upward adjustment for “2020 FLOP / $”

In my report I estimated that the effective computation per dollar in 2020 was 1e17 FLOP / $, and projected this forward to get hardware estimates for future years. However, this seems to have been an underestimate of FLOP / $ as of 2020. This is because:

This means the 2020 start point should have been 2.5 * 2.5 * 1.5 = nearly 10x larger. From the 2020 start point, I projected that FLOP / $ would double every ~2.5 years -- which is slightly faster than the 2010 to 2018 period but slightly slower than Moore’s law. I haven't looked into it deeply but my understanding is that this has roughly held, so the update I’m making here is a one-time increase to the starting point rather than a change in rate (separate from the changes in rate I’m imagining due to the endogeneities update).
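The one-time adjustment and the unchanged doubling rate can be sketched as follows. The constants come from the text (1e17 FLOP / $ in 2020, the factors 2.5, 2.5 and 1.5, and a ~2.5 year doubling time). The function name and the choice of example years are illustrative assumptions, and the sketch does not model the faster rate suggested by the endogeneities update.

```python
import math

ORIGINAL_2020_FLOP_PER_DOLLAR = 1e17   # the report's 2020 estimate
ADJUSTMENT_FACTORS = (2.5, 2.5, 1.5)   # the three factors multiplied in the text
DOUBLING_TIME_YEARS = 2.5              # projected doubling time for FLOP / $


def flop_per_dollar(year, start_value, start_year=2020,
                    doubling_time=DOUBLING_TIME_YEARS):
    """Project FLOP / $ forward from a start point at a constant doubling time."""
    return start_value * 2 ** ((year - start_year) / doubling_time)


adjustment = math.prod(ADJUSTMENT_FACTORS)
adjusted_start = ORIGINAL_2020_FLOP_PER_DOLLAR * adjustment

print(f"one-time adjustment: {adjustment}x")
for year in (2020, 2025, 2030):
    before = flop_per_dollar(year, ORIGINAL_2020_FLOP_PER_DOLLAR)
    after = flop_per_dollar(year, adjusted_start)
    print(f"{year}: {before:.2e} -> {after:.2e} FLOP / $")
```

Because only the start point changes, the adjusted curve sits a constant factor above the original in every year, which is equivalent to shifting the original curve earlier by log2(9.375) × 2.5, or roughly 8 years.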

Updates that push toward longer timelines

Overall, the updates in the previous section seem a lot stronger than these updates.

Claims associated with short timelines that I still don’t buy

Sources of bias I’m not sure what to do with

Putting numbers on timelines is in general a kind of insane and stressful exercise, and the most robust thing I’ve taken away from thinking about all this is something like “It’s really a real, live possibility that the world as we know it is radically upended soon, soon enough that it should matter to all of us on normal planning horizons.” A large source of variance in stated numbers is messy psychological stuff.

The most important bias that suggests I’m not updating hard enough toward short timelines is that I face sluggish updating incentives in this situation -- bigger changes to my original beliefs will make people update harder against my reasonableness in the past, and holding out some hope that my original views were right after all could be the way to maximize social credit (on my unconscious calculation of how social credit works).

But there are forces in the other direction too -- most of my social group is pretty system 1 bought into short timelines,[13] which for many of us likely emotionally justifies our choice to be all-in on AI risk with our careers. My own choices since 2020 look even better on my new views than my old. I have constantly heard criticism over the last two years that my timelines are too long, and very little criticism that they were too short, even though almost everyone in the world (including economists, ML people, etc) would probably have the other view. I find myself not as interested or curious as I should theoretically be (on certain models of epistemic virtue) in pushback from such people. I spend most of my time visualizing concrete worlds in which things move fast and hardly any time visualizing concrete worlds where they move slow.[14]

What does this mean?

I’m unclear how decision-relevant bouncing around within the range I’ve been bouncing around is. Given my particular skills and resources, I’ve steered my career over the last couple years in a direction that looks roughly as good or better on shorter timelines than what I had in 2020.

This update should also theoretically translate into a belief that we should allocate more money to AI risk over other areas such as bio risk, but this doesn’t in fact bind us since even on our previous views, we would have liked to spend more but were more limited by a lack of capacity for seeking out and evaluating possible grants than by pure money.

Probably the biggest behavioral impact for me (and a lot of people who’ve updated toward shorter timelines in the last few years) will be to be more forceful and less sheepish about expressing urgency when e.g. trying to recruit particular people to work on AI safety or policy.

The biggest strategic update that I’m reflecting on now is the prospect of making a lot of extremely fast progress in alignment with comparatively limited / uncreative / short-timescale systems in some period a few months or a year before systems that are agentic / creative enough to take over the world. I’m not sure how realistic this is, but reflecting on how much progress could be made with pretty “dumb” systems makes me want to game out this possibility more.

Bio Anchors part 4 of 4, pg 14-16. ↩︎

A year chosen to evaluate a claim made by Holden in 2016 that there was a >10% chance of TAI within 20 years. ↩︎

Given by the ratio of odds ratios: (0.35 / 0.65) / (0.15 / 0.85) = 3.05. This implies my observations and logical updates from thinking more since 2020 were 3x more likely in a world where TAI happens by 2036 than in a world where it doesn’t happen by 2036. ↩︎
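The ratio of odds in this footnote can be checked with a short Python sketch. It reads 0.15 as the earlier probability of TAI by 2036 and 0.35 as the updated one, which is my reading of the formula rather than something the footnote states explicitly. The helper names are illustrative.

```python
# Sketch: the likelihood ratio (Bayes factor) implied by moving from one
# probability to another. Assumption: 0.15 is the earlier probability and
# 0.35 is the updated one.

def odds(p):
    """Convert a probability into odds in favor."""
    return p / (1.0 - p)


def implied_bayes_factor(prior, posterior):
    """Posterior odds divided by prior odds."""
    return odds(posterior) / odds(prior)


def update(prior, bayes_factor):
    """Apply a Bayes factor to a prior probability and return the posterior."""
    posterior_odds = odds(prior) * bayes_factor
    return posterior_odds / (1.0 + posterior_odds)


bf = implied_bayes_factor(prior=0.15, posterior=0.35)
print(round(bf, 2))                # 3.05
print(round(update(0.15, bf), 2))  # 0.35, recovering the updated probability
```

Working in odds rather than probabilities is what makes the update a single multiplication: evidence that is about 3x more likely in one world than the other multiplies the odds by about 3, whatever the starting point.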

This contains 50% of my probability mass but is not a “50% confidence interval” as the term is normally used, because the range I’m considering is not the range from 25% probability to 75% probability. This is mainly to keep the focus on the left tail of the distribution, which is more important and easier to think about. E.g. if I’m wrong about most of the models that lead me to expect TAI soonish, then my probability climbs very slowly up to 75% since I would revert back to simple priors. ↩︎

People use “few-shot learning” to refer to language models’ ability to understand the pattern of what they’re being asked for after seeing a small number of examples in the prompt (e.g. after seeing a couple of examples of translating an English sentence into French, a model will complete the pattern and translate the next English sentence into French). However, this doesn’t seem like much evidence about the kind of meta-learning I’m interested in, because it takes very little time for a human to learn a pattern like that. If a bilingual French-and-English-speaking human saw a context with two examples of translating an English sentence into French, they would ~immediately understand what was going on. Since the model already knows English and French, the learning problem it faces is very short-horizon (the amount of time it would take a human to read the text). I haven’t yet seen evidence that language models can be taught new skills they definitely didn’t know already over the course of many rounds of back-and-forth.

I’ve also seen EfficientZero cited as evidence that SGD itself can reach human-level sample complexities (without the need for explicit meta-learning), but this doesn’t seem right to me. The EfficientZero model learned the environment dynamics of a game with ML and then performed a search against that model of the environment to play the game. It took the model a couple of hours per game to learn environment dynamic facts like “the paddle moves left to right”, “if an enemy hits you you die,” etc. The relevant comparison is not how much time it would take a human to learn to play the game, it’s how much time it would take a human to get what’s going on and what they’re supposed to aim for -- and the latter is not something that it would take a human 2 hours of watching to figure out (probably more like 15-60 seconds).

The kind of thing that would seem like evidence about efficient meta-learning is something like “A model is somehow trained on a number of different video games, and then is able to learn how to play (not just model the dynamics of) a new video game it hadn’t seen before decently with a few hours of experience.” The kind of thing that would seem like evidence of human-like sample efficiency directly from SGD would be something like “good performance on language tasks while training on only as many words as a human sees in a lifetime.” ↩︎

All the publicly-available code online (e.g. GitHub), plus company-internal repos, keylogging of software engineers, explicitly constructed curricula/datasets (including datasets automatically generated from the outputs of slightly smaller coding models), etc. Also, it seems like most of the reasoning that went into generating the code is in some sense manifested in the code itself, whereas e.g. the thinking and experimentation that went into a biology experiment isn’t all directly present in the resulting paper. ↩︎

Row 17 in sheet “Main” ↩︎

The key exception, as discussed above, was predicting that cheap meta-learning wouldn’t happen, which I’d say it didn’t. ↩︎

Note that there’s a bunch of already-widely-used techniques (most notably search) that some people wouldn’t count as “pure deep learning” which I expect to continue to play an important role. Transformative AI seems quite likely to look like AlphaGo (which uses search), RETRO (which uses retrieval), etc. ↩︎

Though my probability on this necessarily increased some because shifting the distribution to the left has to increase probability mass on “surprisingly cheap,” it’s still not my default. If I had to guess I’d say maybe ~15% chance on <$10B for training TAI and a similar probability that it’s trained by a company with <2% of the valuation of the biggest tech companies. Betting on this possibility -- with one’s career or investments -- seems better than it did to me before (and I don't think it was insane in the past either; just not something I'd consider the default picture of the future). ↩︎

Though labs that are currently smallish could grow to have massive valuations and a ton of employees and then develop transformative systems, and that seems a lot more likely than that a company would develop TAI while staying small. ↩︎

E.g., it seems like it wouldn’t be hard to argue for a “PONR” in the past, along the lines of “alignment is so hard that the fact that we didn’t get started on it 20 years ago means we’re past the point of no return.” Instead it feels like the difficulty of changing course just gets worse and worse over time, and there are very late-stage opportunities that could still technically help, like “shutting down all the datacenters.” ↩︎

I might have ended up, with this update, near the median of the people I hang out with most, but I could also still be slower than them -- not totally sure. ↩︎

Longer timelines are harder to think about because the world will have changed more before TAI, less decision-relevant because our actions will have washed out more, less emotionally gripping, etc. ↩︎